ARC-Encoders

Context Compression, Encoder Decoder, LLMs

This project was carried out during my pre-thesis short-term contract at Kyutai, under the supervision of Edouard Grave and Patrick Pérez.

Abstract

Recent advances such as retrieval-augmented generation and chain-of-thought reasoning have led to longer contexts and higher inference costs in large language models (LLMs). Context compression can mitigate these costs, but many of the most effective approaches require fine-tuning or architectural changes, which may harm general performance outside their intended use.

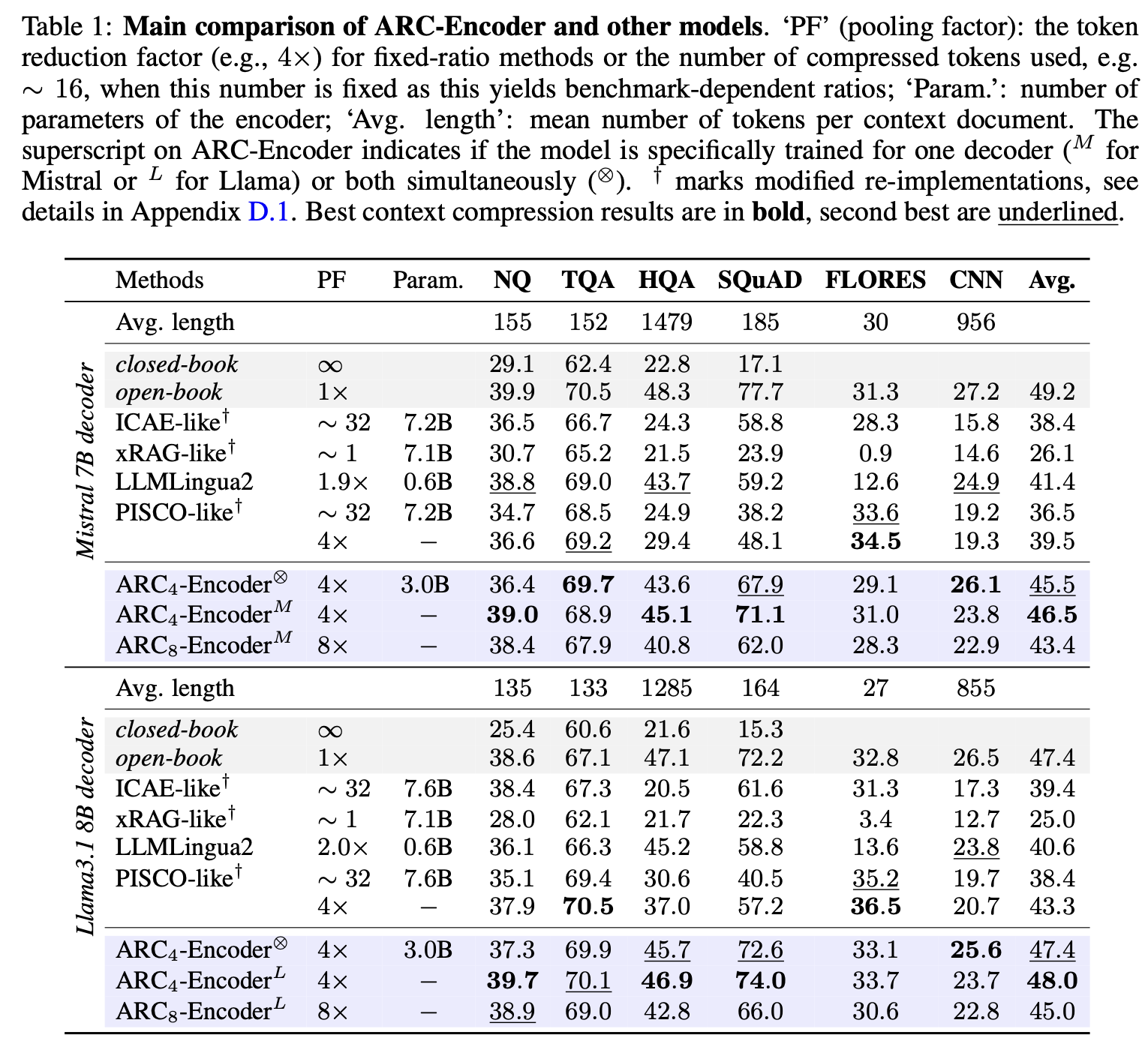

In this work, we propose an alternative: an encoder that compresses context into continuous representations serving as replacements for token embeddings in decoder LLMs. We systematically study training strategies and architectural choices for such encoders, leading to the design of the Adaptable text Representations Compressor (ARC-Encoder). ARC-Encoder produces x-times fewer continuous representations than text tokens (typically x in {4, 8}).
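To make the "x-times fewer" ratio concrete, here is a minimal sketch of how a pooling factor changes the number of vectors passed to the decoder. It assumes plain mean pooling over consecutive token embeddings, which is an illustrative stand-in and not necessarily the mechanism ARC-Encoder uses. The names `compressed_length` and `mean_pool` are hypothetical and do not come from the released code.

```python
import math
from typing import List

Vector = List[float]


def compressed_length(num_tokens: int, factor: int) -> int:
    """Number of vectors left after grouping tokens `factor` at a time."""
    if factor < 1:
        raise ValueError("factor must be a positive integer")
    return math.ceil(num_tokens / factor)


def mean_pool(embeddings: List[Vector], factor: int) -> List[Vector]:
    """Average each run of `factor` consecutive vectors.

    ASSUMPTION: mean pooling is used here only to illustrate the length
    reduction; it is not claimed to be the ARC-Encoder pooling method.
    The last group may contain fewer than `factor` vectors.
    """
    if factor < 1:
        raise ValueError("factor must be a positive integer")
    pooled: List[Vector] = []
    for start in range(0, len(embeddings), factor):
        group = embeddings[start:start + factor]
        dim = len(group[0])
        pooled.append([sum(vec[i] for vec in group) / len(group) for i in range(dim)])
    return pooled


# Toy input: 10 "token embeddings" of dimension 2.
tokens = [[float(i), float(2 * i)] for i in range(10)]

for x in (4, 8):
    compressed = mean_pool(tokens, x)
    print(f"x={x}: {len(tokens)} tokens -> {len(compressed)} continuous representations")
```

With a pooling factor of 8, a 1024-token context would be replaced by 128 continuous representations, so the decoder processes a sequence eight times shorter.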

We evaluate ARC-Encoder across diverse LLM usage scenarios, from in-context learning to context extension, on both instruction-tuned and base decoders. Results show that ARC-Encoder achieves state-of-the-art performance on several benchmarks while improving inference efficiency. Moreover, we demonstrate that a single encoder can be adapted to multiple decoders, which allows it to generalize across LLMs. This makes ARC-Encoder a flexible, efficient, and portable encoder that is compatible with multiple decoders.

We release the training code, fine-tuning dataset, and pretrained models.

Results

ARC-Encoders deliver computational gains of more than 1.5× while maintaining strong performance across various benchmarks. We further highlight their versatility by training encoders for multiple decoders (Llama 3.1 8B, Mistral 7B, and a shared encoder for both), as well as a Llama 2 7B Chat version to illustrate context-extension capabilities.

Authors

Hippolyte Pilchen, Edouard Grave, and Patrick Pérez.

Feel free to reach out if you have any questions; contact details are provided in the paper.